Surfacing the Quiet Signals

A lightweight system for regular user insight

Some users give feedback freely.

Others don’t.

Not because they don’t have opinions — but because the room wasn’t built for them.

How do you design a feedback loop that makes space for them?

Not once. But every sprint.

✦ From Ad-Hoc to Intentional

Most feedback comes from the loudest channels or PMs collecting secondhand sentiment. But beneath that surface is always a quieter group: the thoughtful, hesitant, too-busy or too-introverted users.

User contact was scattered, reactive, and dependent on feature rollouts or support escalations. There was no structure. No rhythm. And crucially, no space to build relationships with users over time.

This changed with a lightweight, repeating structure called User Touchpoints. Designed not just to gather feedback, but to earn it. Inspired by the principles of Continuous Discovery Habits, the goal was to build a sustainable rhythm of small, frequent conversations.

✦ The New System

We used Microsoft Bookings to create a low-barrier scheduling system. Users could self-select a session that fit their time zone and calendar — no emails, no back-and-forth. Sessions were listed with clear purposes and open slots, giving users full autonomy over their involvement.

Sessions are short, focused, and repeatable. They are run directly by designers or developers, often 1:1 or in pairs.

Feature Interviews

Early-stage ideas and upcoming tools

Feature and Design Feedback

Existing features, mid-stage visuals, and interaction flows

Open Sessions

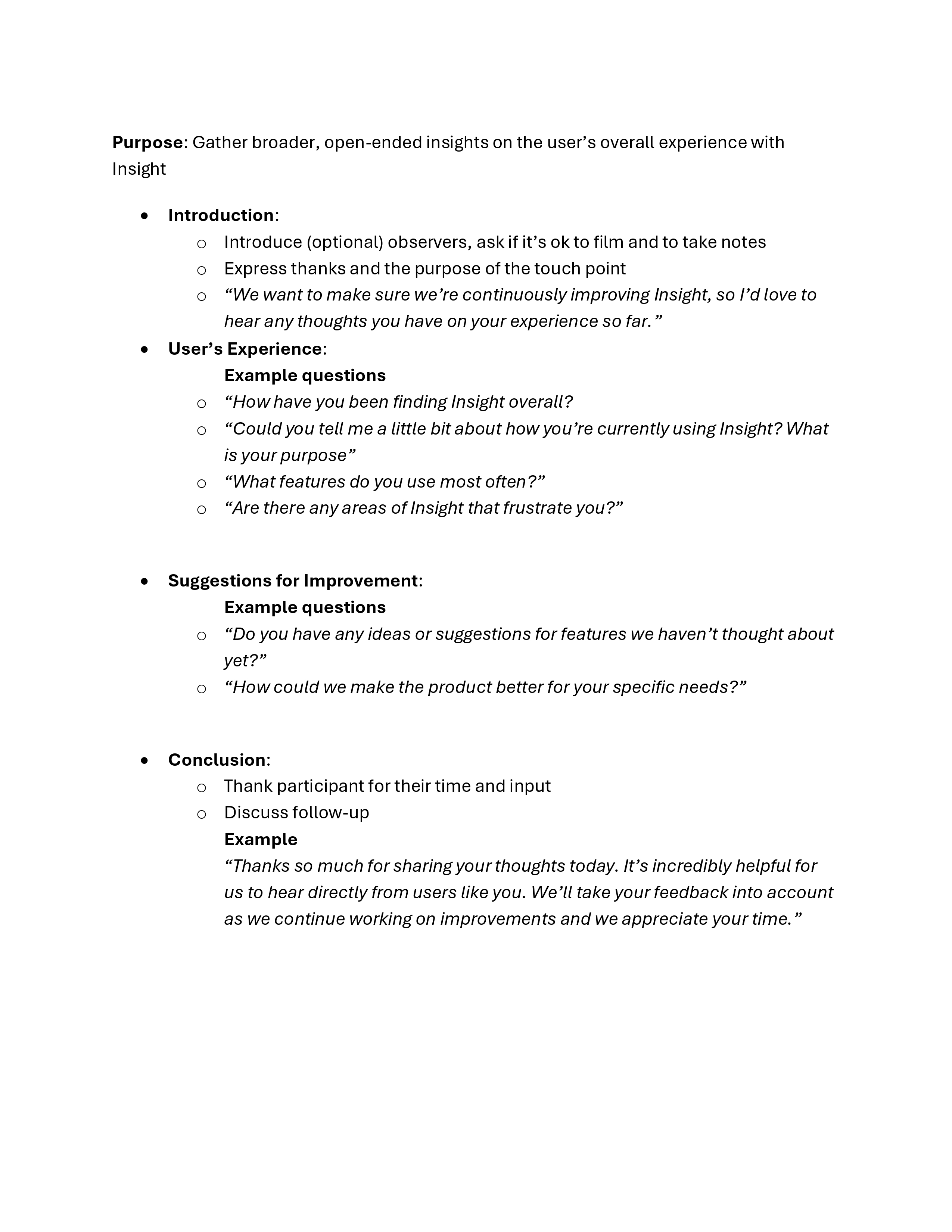

Open-ended questions and observation, often focused on workflows or pain points

✦ Making It a Team Practice

This wasn’t a UX-only effort. As momentum grew, the structure created a safe, guided space for developers and other team members to participate directly.

Initial sessions were run by design, but over time, developers began joining in — and eventually running their own. Support was provided in the form of:

- Scheduling templates

- Conversation prompts

- Debrief guides for capturing and sharing learnings

Instead of asking developers to read research summaries, this approach gave them direct exposure to nuance and context. It changed the way features were discussed and implemented.

✦ A Place for the Quiet Ones

Meaningful results came from the quiet users who, once invited, started showing up on a monthly basis. Developers who never spoke in retros came alive in open feedback sessions. Scientists who never opened support tickets pointed to inconsistencies that shaped future designs.

These weren’t interviews anymore. They had become conversations that continuously shape the product.

Good research is consistent.

We stopped asking: “Do we have time for user research this sprint?”

And started asking: “What kind are we doing this week?”